Types of Assessment Errors

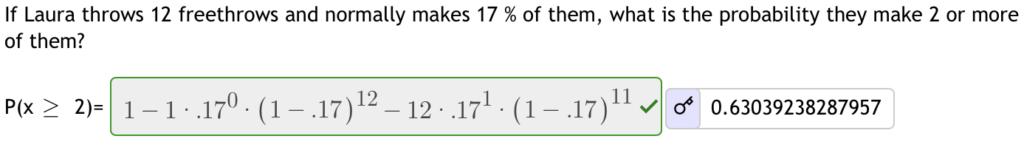

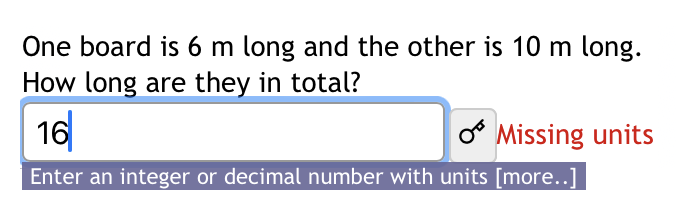

A “technically wrong” answer on an assessment may not show a lack of content knowledge, but rather a misunderstanding, a mistake, or a technicality. These other errors can matter too, but they often pull the student’s focus away from understanding the content and towards test-taking techniques. Here are four distinct types of errors students can make on assessments, and some ways professors can help students avoid them.

1. Not knowing the content

Whether the student “knows” the content is generally what the professor is trying to assess. An answer left blank or incomplete is a good indication of this type of error, but the student may just lack one specific piece of knowledge needed to get started, or may simply have run out of time. If the question only requires a box to be selected, as in multiple choice and matching questions, content knowledge can be faked by guessing. Questions that require a written answer, by contrast, are very hard to start or guess without some level of content knowledge.

One way to reduce the number of incomplete questions, and to determine exactly what the student understands, is to use multi-step questions. Adding a first step where the student identifies the method they will use (a “trig substitution” or a “two-sample dependent t-test”, for instance) can help separate the all-important first step from the rest of the work. Steps can also act as reminders and guides, which can be good for formative assessment but may not be ideal for summative assessment. For summative assessments, students can instead be asked to submit their full rough work with each question, which gives insight when the final answer is incorrect.
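As a rough illustration of the multi-step idea, the sketch below scores the method-identification step separately from the final answer. The function name `grade_two_step`, the mark values, and the tolerance are all assumptions made for this example and do not come from any particular assessment system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class StepResult:
    method_correct: bool
    answer_correct: bool
    marks: float


def grade_two_step(chosen_method: str,
                   final_answer: Optional[float],
                   *,
                   expected_method: str,
                   expected_answer: float,
                   method_marks: float = 1.0,   # hypothetical weighting
                   answer_marks: float = 2.0,   # hypothetical weighting
                   tolerance: float = 1e-6) -> StepResult:
    """Score the 'which method?' step and the final answer independently."""
    # Step 1: did the student pick the intended method?
    method_ok = chosen_method.strip().lower() == expected_method.strip().lower()

    # Step 2: is the final answer (if any was given) within tolerance?
    answer_ok = (final_answer is not None
                 and abs(final_answer - expected_answer) <= tolerance)

    marks = method_marks * method_ok + answer_marks * answer_ok
    return StepResult(method_ok, answer_ok, marks)


# A student who names the right method but leaves the answer blank
# still earns the method marks.
result = grade_two_step("Trig substitution", None,
                        expected_method="trig substitution",
                        expected_answer=0.5)
print(result)
```

Keeping the two checks separate means a blank final answer no longer looks the same as not knowing where to begin.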

2. Misreading

If a question is misread or misinterpreted, the answer can still be correct, just for the wrong problem. Perhaps the question began in a similar way to a practice problem, so the rest was skipped to save time, or technical language left the student confused about a complex setup. Either way, the professor is now assessing the student’s ability to interpret text, not their ability to solve a specific problem. The misunderstood problem can also be significantly harder or easier than intended, making it nearly impossible to assess the student’s knowledge of the content.

Ensuring students have access to live support during their assessment can greatly reduce comprehension errors. Whether it is done verbally or just through chat, the act of asking the question can itself prompt better understanding.

Having multiple representations of the question, including diagrams and symbolic representations, in addition to text, can ensure students fully understand the question. This might also be an opportunity to help students connect the different representations. Alternatively, students can be asked to draw or explain their understanding before proceeding, which is also a good life skill.

3. Calculation mistakes

Once a question is started, mistakes and small calculation errors can creep in. These errors could be entering a number wrong into a calculator, writing down “71” instead of “17”, mistaking a negative sign for an eraser mark, or confusing a Z with a 2. Ideally students will recognize these if there is context to judge their answer against (“Timmy’s final walking speed is not 247 km/h”), but stress and other factors can leave little time or headspace for these kinds of checks.

If the main goal of an assessment is to interpret a problem and identify the correct tool to solve it, then make that identification an answer in its own right. Students can enter the calculation they would perform as their answer, so that their calculation ability is not confounded with their content knowledge.

4. Technicalities

Technicalities are some of the worst ways to get a computer-graded assessment wrong. The student, and maybe even the professor, know the student understands the concept, “but technically…” the answer is wrong because the units, the decimals, or some other feature is incorrect. A question that reads “solve this complex problem, and put the correct units and significant digits” just reads as “solve the problem, deal with the technicalities later” to a student during a test. The time between starting the problem and getting to the “technicalities” can be long enough that they forget about them completely.

Moving technicalities closer to the answer box, or showing reminders only if an entry error is made, can reduce clutter and confusion in the actual question. Students can then tackle the comprehension and calculation first and the technicalities separately, dealing with one error type at a time.
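One possible way to show reminders only when an entry error is made is sketched below. The checker judges the numeric value separately from the formatting, and it returns a reminder only for the technicality that was actually missed. The function name `check_entry`, its parameters, and the reminder wording are hypothetical and are not taken from any real grading platform.

```python
def check_entry(value_text: str, unit_text: str, *,
                expected_value: float, expected_unit: str,
                decimals: int) -> dict:
    """Separate 'is the value right?' from 'was it entered correctly?'."""
    reminders = []

    try:
        value = float(value_text)
    except ValueError:
        # Not a number at all: only an entry reminder, no content judgement.
        return {"value_ok": False, "reminders": ["Please enter a number."]}

    # Content check: the value is right if it agrees with the expected
    # value to within rounding at the requested number of decimal places.
    value_ok = abs(value - expected_value) <= 0.5 * 10 ** (-decimals) + 1e-12

    # Technicality check 1: number of decimal places actually typed.
    typed_decimals = len(value_text.strip().partition(".")[2])
    if typed_decimals != decimals:
        reminders.append(f"Reminder: give your answer to {decimals} decimal places.")

    # Technicality check 2: units.
    if unit_text.strip() != expected_unit:
        reminders.append(f"Reminder: the answer should be in {expected_unit}.")

    return {"value_ok": value_ok, "reminders": reminders}


# Right value, one decimal place too many: only that reminder is shown.
print(check_entry("5.123", "km/h",
                  expected_value=5.1234, expected_unit="km/h", decimals=2))
```

A student who enters everything correctly never sees the reminders, so the question text itself can stay focused on the problem.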

General ways to avoid “technical errors”

For many subjects and question types, all four of these errors get thrown together, and it’s often difficult to pull them apart. Assigning separate marks, or part marks, to each type can help, and capping the marks lost to “dumb errors” can keep the focus on content knowledge.
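A minimal sketch of capping the marks lost to non-content errors might look like the following. The error-type labels, the function name `score_question`, and the numbers in the example are assumptions chosen for illustration only.

```python
def score_question(total_marks: float, deductions: dict, *,
                   non_content_cap: float) -> float:
    """Apply deductions per error type, capping the non-content ones.

    `deductions` maps an error type ("content", "misreading",
    "calculation", "technicality") to the marks lost for it.
    """
    content_lost = deductions.get("content", 0)

    # Everything that is not a content error shares a single cap.
    other_lost = sum(lost for kind, lost in deductions.items()
                     if kind != "content")

    total_lost = content_lost + min(other_lost, non_content_cap)
    return max(total_marks - total_lost, 0)


# 4.5 marks of slips and technicalities cost at most 2 marks here.
print(score_question(10,
                     {"misreading": 1, "calculation": 2, "technicality": 1.5},
                     non_content_cap=2))
```

With a cap like this, a student who understands the content cannot lose most of a question's marks to slips alone, while content errors still count in full.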

An introductory digital homework can focus only on the assessment system’s syntax and process, before students need to apply new content knowledge. Questions like “enter this number to 4 decimal places”, “enter this equation”, or “draw this diagram” can ensure students have the basic skills and understanding to succeed. This can also be a place to introduce or test key course details like due dates, Late Passes, etc.

![This class allows the use of 10 LatePasses. If you have a LatePass and the assignment allows using LatePasses, you can click on Use LatePass next to the assignment link. This will extend the due date by 24 hours. There is no penalty for using LatePasses. If you use a LatePass, the assignment will appear on the calendar on the new due date. Late passes can't be used to complete work beyond the course end date. To review, answer the questions below.](https://bowties.followthemop.ca/wp-content/uploads/2021/08/Late-Passes.png)

Another way to avoid many non-content-related errors is to give all students additional time, for example double time. Some accessibility requirements grant certain students additional time on tests and exams, but not all students can be properly diagnosed or accommodated. Unless a specific course outcome requires quick recall and process, which would negate accessibility accommodations anyway, granting all students additional time can reduce test anxiety and give students time to review their work and catch “obvious” mistakes and errors.

Not all errors are created equal, but there are things that can be done to help avoid them. Professors need to carefully consider these error types and how much each one matters to the course knowledge, and students who are aware of them are better placed to avoid them.